Design sprint tools for third-party scrutiny

Cities increasingly use algorithms to govern daily life and support decision-making. At the same time, algorithmic decision-making can harm people’s fundamental human right to autonomy. Can we develop AI that is open and responsive to dispute? Our team designed a speculative dashboard for people to scrutinize a specific algorithmic system.

The Dutch government aims to ensure that algorithms are implemented and used within legal frameworks, and to improve oversight of algorithms and data.

At Responsible Sensing Lab, we believe that algorithms used by the government should be easy to oversee. In addition, it remains a core principle of our democratic society to be able to scrutinize governmental procedures and systems.

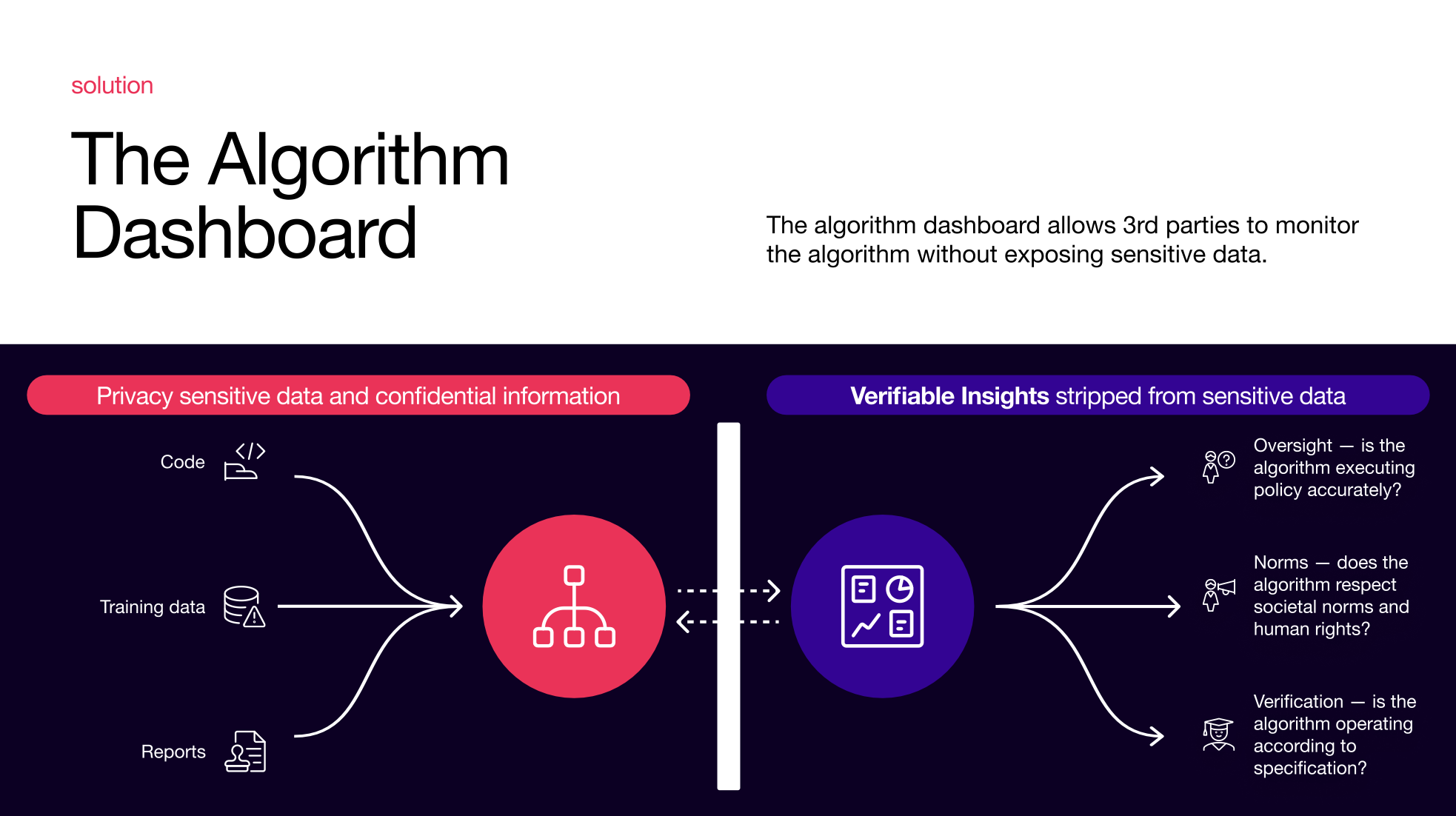

The challenge: How can we, as a society, investigate algorithmic outcomes to monitor effectiveness, discrimination, or arbitrariness without exposing privacy-sensitive or confidential information?

A contextual example: enforcement based on algorithms

Classic enforcement

Administrative employees decide how to enact laws to enforce policy rules. The effects of this enforcement can be affected by political action, which subsequently shapes new laws and accompanying enforcement norms.

Algorithmic advice

Administrative employees implement rules in an algorithmic system that supports enforcement, for example by prioritizing whom to target for inspection. The effects of this enforcement are harder for citizens to understand and challenge, since the algorithmic component of the enforcement is a black box.

How can concerned citizens understand how the algorithm implements the rules in this case? Where can they go with their concerns?

Features of contestable AI

In his article on contestable AI published in Minds and Machines, Kars Alfrink proposes several system features that enable the contestation of AI. These features include built-in safeguards using formal constraints, traceable decision chains, means for intervention requests, and tools for scrutiny. During our design exploration, the team focused on the last of these: tools for scrutiny.

“Tools for scrutiny provide information about AI systems that outside actors, such as watchdogs, NGOs, journalists, and politicians, can use to ensure systems operate within acceptable norms and to appeal for system changes if necessary.”

— Kars Alfrink, PhD candidate, TU Delft

A dashboard for people to scrutinize algorithms

A dashboard can help third parties monitor the workings of an algorithm. Concerned citizens can turn to these third parties to understand how the rules are implemented and to become informed enough to try to influence the implementation.

We set up a design trajectory with researcher Kars Alfrink (TU Delft) and The Incredible Machine to explore how we could enable this monitoring by third parties.

The result of this exploration is a concept design of a dashboard for people who oversee the government (e.g., council members, watchdogs, and civil society groups) to scrutinize a specific algorithm.

Scrutiny challenges

How can you monitor an algorithm if you cannot see the processed data? We created three concept designs:

- 1. Audit

- 2. Twin

- 3. Watchdog

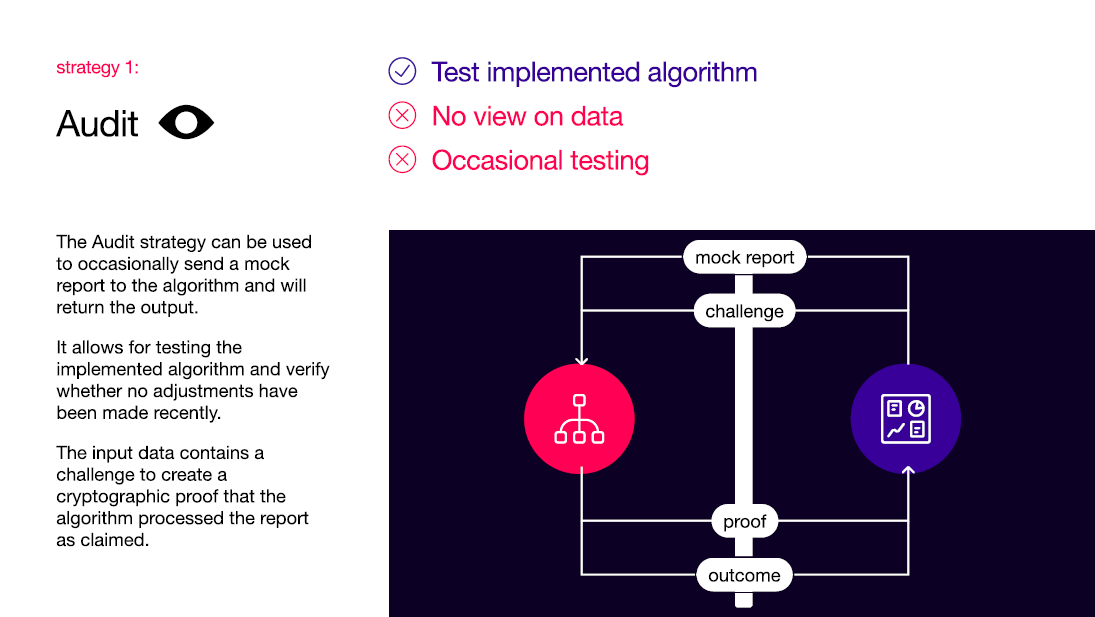

1. Audit

The audit concept makes it possible to occasionally send a mock report to the algorithm, which returns its output. This allows for testing the implemented algorithm and verifying that no adjustments have been made recently. The input data contains a challenge used to create a cryptographic proof that the algorithm processed the report as claimed.
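The challenge-and-proof idea can be illustrated with a minimal Python sketch. Everything in it is an assumption made for illustration: the scoring rule, the field names, the shared key, and the choice of an HMAC as the "proof" are hypothetical and not part of the concept design. An HMAC only shows that the key holder answered this specific challenge for this specific report; a real deployment would likely need a stronger mechanism to show that the deployed code itself produced the output.

```python
import hashlib
import hmac
import json
import secrets

# Hypothetical secret shared by the auditor and the deployed system (assumption).
SHARED_KEY = b"demo-key-not-for-production"


def priority_score(report: dict) -> int:
    """Stand-in for the deployed algorithm (hypothetical rule, not the real one)."""
    return 2 * report["complaints"] + (5 if report["repeat_offender"] else 0)


def canonical(obj: dict) -> bytes:
    """Serialize deterministically so both sides sign the same bytes."""
    return json.dumps(obj, sort_keys=True, separators=(",", ":")).encode()


def handle_audit(report: dict, nonce: str) -> dict:
    """System side: score the mock report and bind nonce, input, and output together."""
    output = priority_score(report)
    message = canonical({"nonce": nonce, "report": report, "output": output})
    proof = hmac.new(SHARED_KEY, message, hashlib.sha256).hexdigest()
    return {"output": output, "proof": proof}


def verify_audit(report: dict, nonce: str, response: dict) -> bool:
    """Auditor side: recompute the tag and compare in constant time."""
    message = canonical(
        {"nonce": nonce, "report": report, "output": response["output"]}
    )
    expected = hmac.new(SHARED_KEY, message, hashlib.sha256).hexdigest()
    return hmac.compare_digest(expected, response["proof"])


# The auditor sends a mock report together with a fresh random challenge.
mock_report = {"complaints": 3, "repeat_offender": True}
nonce = secrets.token_hex(16)
response = handle_audit(mock_report, nonce)
is_valid = verify_audit(mock_report, nonce, response)
```

Because the nonce is fresh for every audit, a previously recorded response cannot be replayed, and a response whose output was altered afterwards fails verification.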

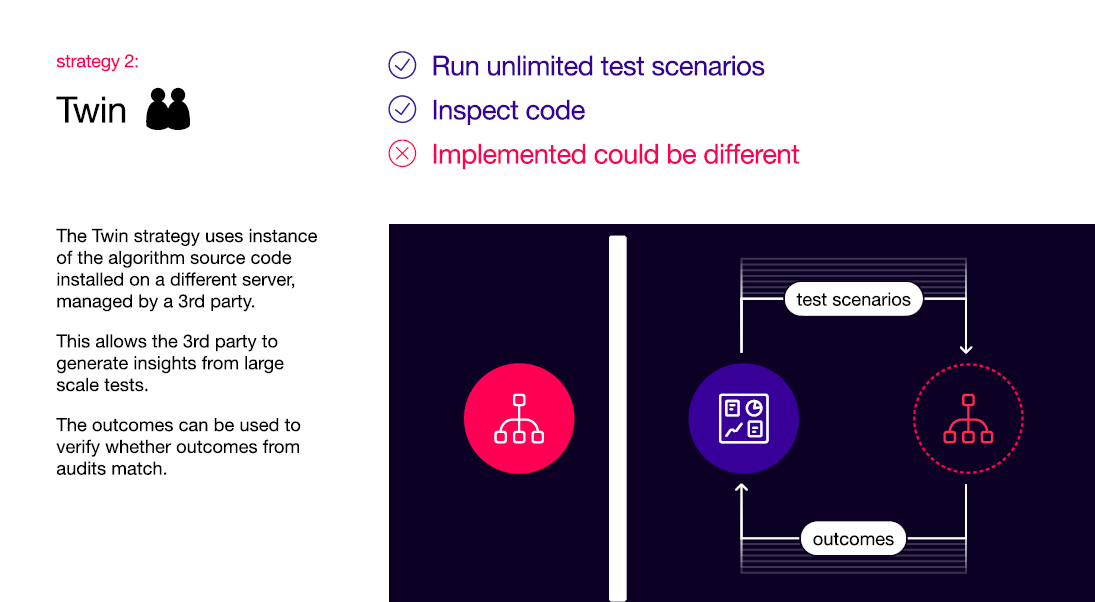

2. Twin

The twin concept uses an instance of the algorithm's source code installed on a different server managed by a third party. This copy allows the third party to generate insights from large-scale tests. The outcomes can also be used to verify whether the results from audits match.
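As a rough Python sketch of how a twin could be used, the example below runs a copy of the algorithm on synthetic reports and cross-checks recorded audit results against it. The scoring rule, the report fields, the synthetic data generator, and the audit log format are all hypothetical and chosen only to make the idea concrete.

```python
import random
from statistics import mean


def twin_score(report: dict) -> int:
    """Third party's copy of the algorithm (hypothetical rule, for illustration)."""
    return 2 * report["complaints"] + (5 if report["repeat_offender"] else 0)


def synthetic_reports(n: int, seed: int = 0) -> list:
    """Generate reproducible synthetic test reports; no real data is involved."""
    rng = random.Random(seed)
    return [
        {"complaints": rng.randint(0, 10), "repeat_offender": rng.random() < 0.3}
        for _ in range(n)
    ]


def mean_score_by_group(reports: list) -> dict:
    """Large-scale insight: average score for repeat offenders vs. others."""
    groups = {True: [], False: []}
    for report in reports:
        groups[report["repeat_offender"]].append(twin_score(report))
    return {key: mean(scores) for key, scores in groups.items() if scores}


def audit_mismatches(audit_log: list) -> list:
    """Return audit entries whose recorded output differs from the twin's output."""
    return [e for e in audit_log if twin_score(e["report"]) != e["output"]]


# Hypothetical audit results; the second entry is deliberately inconsistent.
audit_log = [
    {"report": {"complaints": 3, "repeat_offender": True}, "output": 11},
    {"report": {"complaints": 1, "repeat_offender": False}, "output": 9},
]

insights = mean_score_by_group(synthetic_reports(1000))
mismatches = audit_mismatches(audit_log)
```

A non-empty `mismatches` list would suggest that the deployed system and the twin have diverged, which is the kind of signal a third party could raise on the dashboard.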

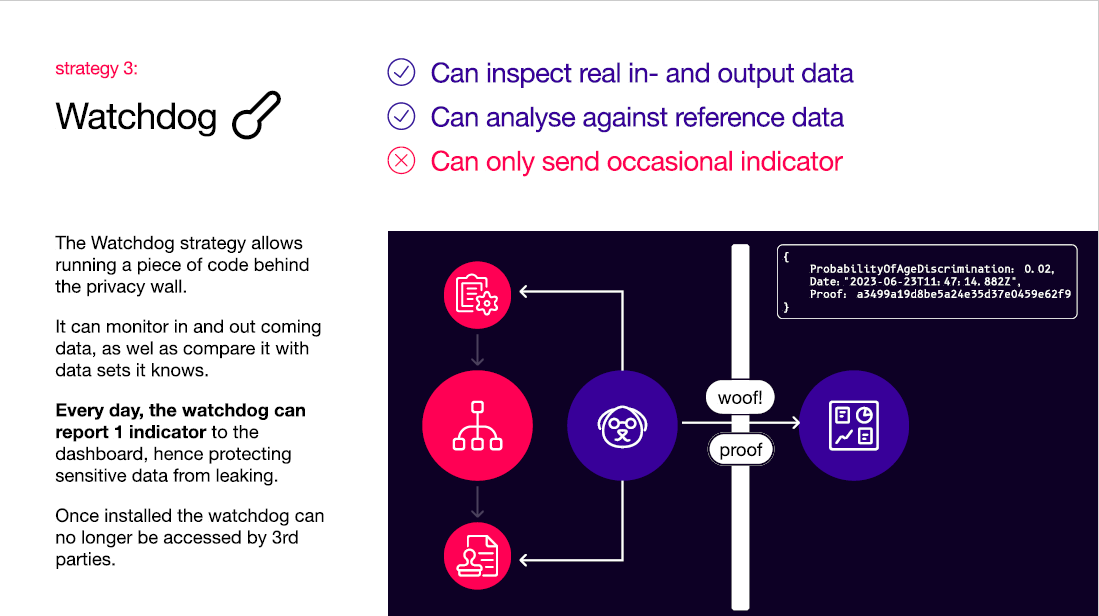

3. Watchdog

The watchdog concept allows for running code behind a privacy wall. It can monitor incoming and outgoing data and compare them with data sets it knows. The watchdog can report a single indicator to the dashboard each day, which protects sensitive data from leaking. Once installed, the watchdog can no longer be accessed by third parties.
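The idea of reducing a day of sensitive traffic to one number can be sketched in Python as follows. The choice of indicator (the largest gap in flag rate between districts), the `Decision` record, and its fields are assumptions made for illustration; the concept design does not specify which indicator the watchdog would compute.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Decision:
    district: str  # sensitive attribute; stays behind the privacy wall
    flagged: bool  # algorithm output for this case


def daily_indicator(decisions: list) -> float:
    """Largest gap in flag rate between any two districts, as one rounded number."""
    totals = defaultdict(int)
    flagged = defaultdict(int)
    for decision in decisions:
        totals[decision.district] += 1
        flagged[decision.district] += decision.flagged
    rates = [flagged[district] / totals[district] for district in totals]
    if len(rates) < 2:
        return 0.0  # not enough groups to compare
    return round(max(rates) - min(rates), 2)


# Hypothetical decisions observed behind the wall during one day.
today = (
    [Decision("A", True)] * 2
    + [Decision("A", False)] * 2
    + [Decision("B", True)] * 1
    + [Decision("B", False)] * 3
)

# The only value that would leave the privacy wall.
indicator = daily_indicator(today)
```

In this example district A is flagged in 2 of 4 cases and district B in 1 of 4, so the reported indicator is 0.25. The individual records never reach the dashboard.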

“We presented this speculative dashboard design to council members, watchdogs, and academics during a workshop at AMS Institute. The design concept shows that it is possible to monitor algorithms while safeguarding sensitive data. This approach requires thorough involvement and collaboration between government parties and algorithm programmers. Also, an extended commitment to privacy is necessary, and standards are needed to realize models for scrutiny.”

— Fabian Geiser, Project Manager, Responsible Sensing Lab