Interview Ibo van de Poel

We interviewed prof. dr. ir. Ibo van de Poel, professor in Ethics and Technology at Delft University of Technology.

Hi Ibo, could you tell us more about your work?

For the past 25 years, I have been working on ethical questions around technology. Most of my work concerns Value Sensitive Design and Responsible Innovation. A central theme is: how do you include ethics or moral values in the development of technology from the very beginning, or at least at an early stage, instead of developing the technology first and only thinking about its moral consequences later? This is where my work clearly connects with that of the Responsible Sensing Lab.

Biography

Ibo van de Poel is Antoni van Leeuwenhoek Professor in Ethics and Technology at the School of Technology, Policy and Management at TU Delft. He completed a master’s degree in Philosophy of Science, Technology and Society (with a propaedeutic exam in Mechanical Engineering) and obtained a PhD in Science and Technology Studies from the University of Twente.

Van de Poel’s research focuses on several themes in the ethics and philosophy of technology: value change, responsible innovation, design for values, the moral acceptability of technological risks, engineering ethics, moral responsibility in research networks, ethics of newly emerging technologies, and the idea of new technologies as social experiments.

As a professor, you are working on Responsible Innovation. How would you define that?

Well, there are two, or perhaps even three, definitions.

One way of defining Responsible Innovation is in terms of process. The scientific literature names a few criteria; the process has to be:

- Anticipatory - anticipating potential societal consequences.

- Inclusive - developed together with stakeholders.

- Reflexive - which goals do we try to achieve and which values do we include?

- Responsive - it has to answer societal questions and respond to new insights and developments along the way.

The second definition is related to the school of Value Sensitive Design. It holds that one should start with moral or societal values, operationalize them and design them into products.

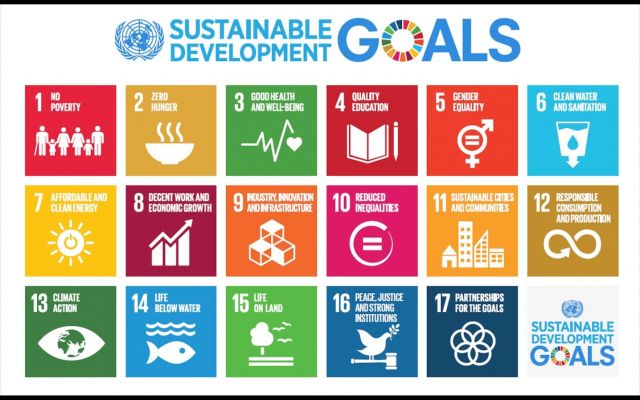

Recently, a third definition has emerged: the idea that innovation should be driven by societal challenges. This is a mission-driven approach, for instance one built around the Sustainable Development Goals of the United Nations.

These definitions are not strictly separated, and of course they cannot exist without each other, but they take different approaches. You often see that political scientists and sociologists mainly use the first definition, while the second is more common among philosophers of technology and ethicists.

Is there a tension between definition one (focusing on the process) and two (operationalizing values) or could these go hand in hand?

From the perspective of either definition, you could criticize the other. On the one hand, you could organize the best possible process and still end up with a discriminatory technology. Following the best process doesn’t always lead to a good result.

On the other hand, it is politically naive to assume that you can think about societal values without taking the societal process into account. That way of thinking leaves you with nothing more than your own preconceptions.

For the RSL this is interesting. We have been tasked to explore how to operationalize values (such as the Tada values) into smart city systems, yet we are aware that actual decisions need to be made by the city council for them to be democratically legitimate. What mandate do we - as bureaucrats - have to start embedding values in technology?

Firstly, your work is democratically legitimized by the political decision in the coalition agreement to operationalize the Tada values. Secondly, I think that Value Sensitive Design and similar approaches already involve stakeholders in the process, so giving even more attention to participatory processes will further increase the legitimacy of the outcome. The ethical perspective also emphasizes the involvement of underrepresented groups. In practice this isn’t easy, but it should be an ambition.

One of the subjects we collaborate on is value-driven design. Our focus is the urban context. Does the urban context differ from other contexts, and if so, how?

In terms of its underlying philosophy and methodology, value-driven design is largely independent of the domain it is applied to.

I think any technology development should be value-driven, but I would say this is even more important for the city, because a core feature of the city is that it is a public domain with a great many stakeholders. Once an urban technology comes into being, it will have consequences, for instance for how we interact with each other.

Value Sensitive Design started in IT and often has a product focus. In product development, you have the possibility to ‘start from scratch’ and embed values from the outset. The city, however, is a sociotechnical system that already exists. You never really start designing from scratch; you design interventions on top of what is already there.

I think this requires more scientific consideration. What does it mean to intervene in an already existing system, in which the other elements are affected as well?

Part of our focus at the Responsible Sensing Lab comes from the assumption that - with the increasing deployment of digital technology - a concentration of power is arising that could become dangerous. And that is why we explore how to design urban technologies in such a way that we limit what they can do by design, so certain uses will simply be impossible. Shuttercam is an example of this way of thinking.

However, in an earlier conversation you introduced the idea of ‘designing for responsibility’ as opposed to ‘designing impossibility’. Could you give an example of this concept?

In the ethics of technology, the term ‘capability caution’ has been coined. It refers to the idea that a technology shouldn’t be able to do more than what it is designed for, which makes it more difficult to misuse the technology or use it for bad purposes. Additionally, you may want to design a technology in such a way that it cannot be misused; in other words, you try to steer behavior through technology. A traditional example of the latter is the chainsaw. It has deliberately been designed so that you need two hands to use it, which makes it nearly impossible to saw off your own hand.

A more recent example is ‘intelligent speed adaptation’: the practice of remotely enforcing speed restrictions on cars, scooters or e-bikes in certain areas. However, situations can occur in which it would be a good choice to disregard the speed limit, for instance to prevent an accident, and with enforced restrictions that is no longer possible. ‘Designing for responsibility’ would allow for this. It would encourage people to take responsibility rather than restricting them.

The idea behind ‘designing for responsibility’ is that, because we cannot fully predict the future, unexpected situations will always occur in which limiting a functionality may cause harm.

There is also a philosophical perspective on this, which focuses on the idea of morality. Kant says that individuals can only act morally if they also have the choice to act immorally. In other words, choosing what is right should be a conscious and voluntary choice. If you deprive people of that freedom, they will no longer be able to deal with such situations themselves; they will take no responsibility, and they will not learn and develop as individuals.

When should you apply behavioral steering, and when should you design for responsibility?

This depends on the situation and calls for careful consideration. In the chainsaw example, I would say the built-in limitation is the right choice; the design is good in its context. But in the example of enforced speed restrictions, my feeling is that you are limiting people’s autonomy too much.

Designing choices ‘away’ brings risks. Take recommender systems, such as the one used in the Toeslagenaffaire (the childcare benefits scandal at the Dutch tax authority). The system that was used did not encourage people to take responsibility. It worked the other way round: people had to explain themselves whenever they deviated from the system’s recommendations. That is partly why things might have gone wrong.

One of the ideas in the field of responsible artificial intelligence is to build systems that allow for a dialogue, so that people can ask the system questions. This stimulates people to think for themselves. Designing for responsibility means designing a system that gives people the option to act responsibly, while giving them the information and time they need to take that responsibility. This corresponds to the insight from psychology that people will not think for themselves if information is presented in a certain way.

This is much better than a system that simply says: this is how you should do it. If you give people no reason or occasion to deviate, they will generally follow the prevailing norm. We have to do the exact opposite of making it easy.

Autonomous weapons are another example: there is a rule that a human should be somewhere in the loop of decision making. This can lead to situations that formally comply with this requirement but in which, for instance, the person in the loop is not given enough time to decide. In such a situation, the assigned responsibility is meaningless in practice.

What we usually do is give people medicine with a package leaflet, but we know that people don’t read it. Instead, we could give them just the medicine and the option to ask questions. That would enhance responsibility.

Perhaps. But we should first consider how much responsibility people should take, as this varies from decision to decision. The next question is: how do we design for it?

In the package leaflet example, it could be very important that people are aware of a certain side effect. You could decide that they have to watch a video about this side effect before they are allowed to take the medicine. That is just a silly example, of course.

This is what we call informed consent. The same goes for cookies: we know that 99% of people don’t read cookie notices. However, this is slowly changing with the GDPR, which pushes people to give consent more consciously. This illustrates that there is room to play with how consent is designed.

At the same time, I am somewhat tired of making the same trivial choices over and over again. I compare this to my marriage: I gave my consent when I married my wife. Of course we thought about it first, and we organized a formal ceremony to celebrate it, but it was a one-time decision.

Couldn’t we do the same with our personal data? Take a day to think about how your personal data should be handled throughout your life, celebrate it with a personal-data ritual, and you’re done for the rest of your life. Likewise, my wife and I don’t argue every day about our property and to whom it belongs.

Did you know there are companies that actually do this? They offer services that let you decide once which companies you want to share your data with. Of course this choice remains open for review, but you do not need to confirm it every day.

This is an example of designing from the user’s perspective. You choose less often, but more consciously, and in this sense you enhance people’s autonomy. From a philosophical perspective, cookie notices and package leaflets offer the appearance of autonomy rather than actual autonomy. This is exactly why you should think about these alternatives.

Publications & grants

In 2010, Ibo van de Poel received a prestigious VICI grant from the Netherlands Organisation for Scientific Research (NWO) for his research on new technologies as social experiments, and in 2018 he received an ERC Advanced Grant for the project ‘Design for changing values: a theory of value change in sociotechnical systems’. He is also one of the six PIs of the 10-year Gravitation project Ethics of Socially Disruptive Technologies.

Ibo van de Poel's latest publication is called "Understanding Technology-Induced Value Change: a Pragmatist Proposal."

What should we focus on as the Responsible Sensing Lab, in your opinion?

Well, first of all I think it is very valuable that you are exploring value-driven design, as this is still quite rare.

However, a significant part of public space is designed by commercial companies that provide technology, and you have little influence there. As a city, you could have a say in which technology is allowed or how it should be used, but it is hard to affect its design.

Perhaps you could think about working more closely with commercial companies from an urban perspective.

What is also interesting to think about is how you design the process in close consultation with citizens. How do you involve them in a way that is meaningful and significant? In my experience, there is always room for improvement here.

Last question: could you give the readers a little glimpse of the whitepaper on ‘responsible smart cities’ that you and Thijs are working on?

We formulated a list of around twenty values that should form the basis of responsible smart cities. But the challenge is: how do you operationalize these values in technology design? They are open to different operationalizations, and some values conflict with each other. How do you weigh values against each other in a systematic way?

Governments, often unknowingly, already have experience with this in the form of cost-benefit analysis. But such analyses usually mix practical concerns, financial metrics and abstract values interchangeably. From a philosophical perspective it would be better to distinguish more clearly between these. This weighing is already being done implicitly, but it is rare for values to be considered explicitly and systematically.

We want to look at the process of operationalizing values and resolving value conflicts, and at whether there are design patterns that work more or less generally in an urban context.

Sounds interesting. We cannot wait to read this whitepaper. Thanks so much for your time!

We will update you on the whitepaper as soon as it is published. Keep an eye out!